(Source – World Tech Festival)

The Rise of AI Manipulation

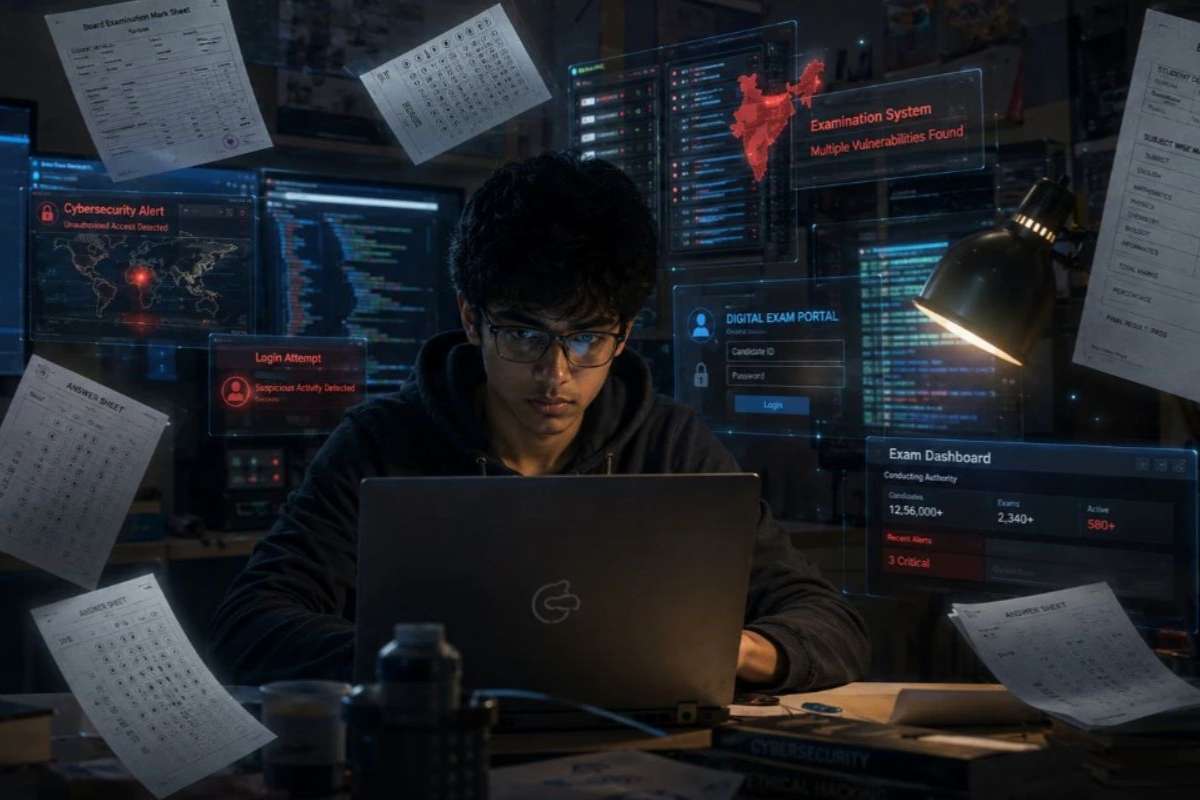

Artificial intelligence (AI) has reached a new frontier, and its power is more accessible than ever before. Manipulating recorded sounds and images isn’t a novel concept, but the ease with which individuals can now do so is a recent development. Generative AI, a subset of AI, enables the creation of hyper-realistic images, videos, and audio clips. This deepfake technology, once reserved for experts, is now within reach of anyone with an internet connection. Professor Hany Farid of the University of California, Berkeley, emphasizes that the past year has seen a significant surge in the accessibility and affordability of this technology. For just a few dollars a month, anyone can upload snippets of someone’s voice and generate cloned speech from typed words, marking a concerning democratization of manipulation capabilities.

Maryland Case Highlights Dangers

Last week, a troubling incident unfolded at a Maryland high school, shedding light on the dark potential of AI manipulation. Authorities in Baltimore County revealed that the principal of Pikesville High, Eric Eiswert, had become a victim of AI-generated deception. The school’s athletic director, Dazhon Darien, allegedly cloned Eiswert’s voice to create a fake recording containing racist and antisemitic comments. The doctored audio, initially circulated via email among teachers, quickly spread across social media platforms. The recording emerged as Eiswert was raising concerns about Darien’s job performance and possible financial misconduct, and its circulation led to the principal being placed on leave. As the incident unfolded, police guarded Eiswert’s residence while the school faced a barrage of angry calls and hate-fueled messages. Forensic analysis indicated traces of AI-generated content with human editing, signaling a worrying trend in the misuse of advanced deepfake technology for nefarious purposes.

Addressing the Threat and Moving Forward

The prevalence of AI-generated disinformation, particularly in audio form, underscores the urgent need for proactive measures. While some companies offering AI voice-generating technology claim to enforce prohibitions against harmful usage, self-regulation remains inconsistent. Suggestions for mitigating risks include requiring users to provide identifying information and embedding digital watermarks in recordings and images. Additionally, increased law enforcement action against criminal misuse of AI, coupled with enhanced consumer education, could serve as effective interventions. However, regulating AI raises ethical complexities, because deepfake technology also has positive applications, such as translation services, that any rules must take into account. Achieving international consensus on ethical standards further complicates the landscape, given varying cultural attitudes toward AI usage. As society grapples with the implications of AI manipulation, addressing these multifaceted challenges is paramount to safeguarding trust and integrity in the digital age.