Assessing AI Model Risks

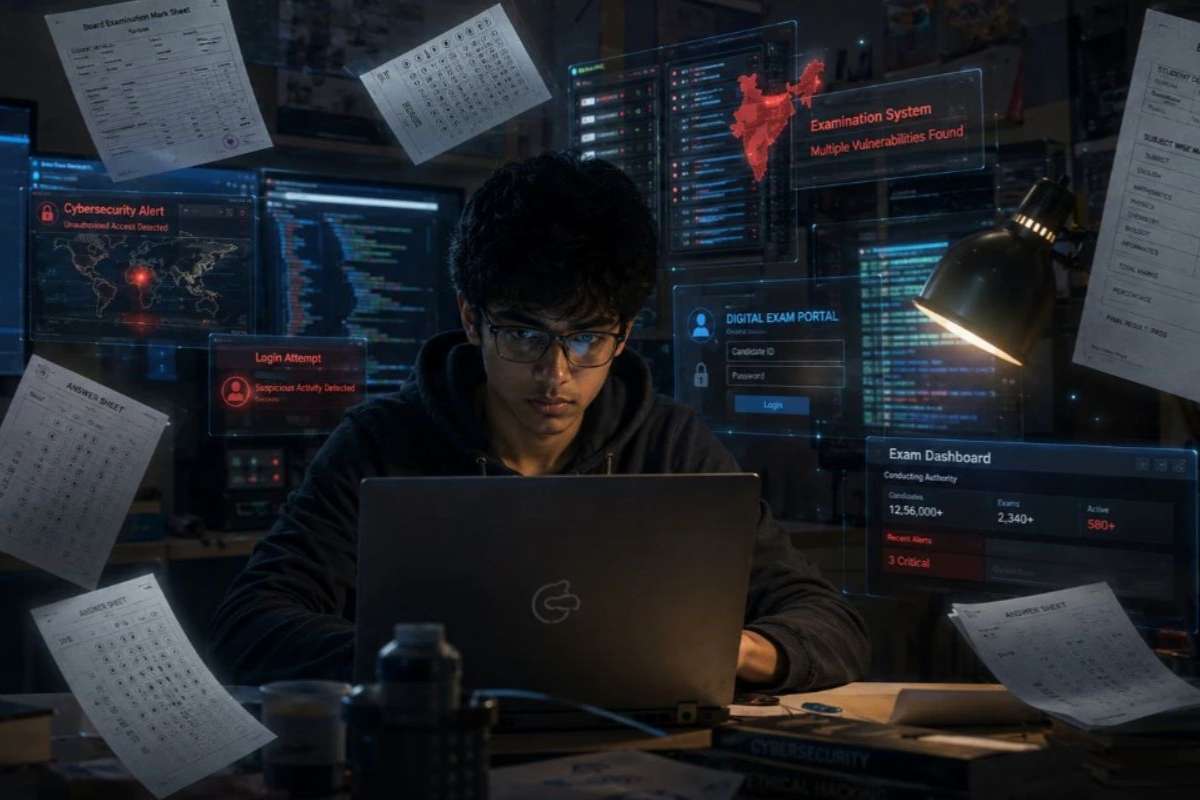

A groundbreaking study, released on Tuesday, introduces a novel approach to evaluating the potential dangers AI models pose. Led by researchers from Scale AI, a prominent AI training data provider, and the Center for AI Safety, a nonprofit organization, the study offers a technique to gauge whether AI systems harbor hazardous knowledge. This innovation comes amid rising concerns about the misuse of AI in cyberattacks and the development of bioweapons.

The study enlisted the expertise of over 20 specialists in biosecurity, chemical weapons, and cybersecurity to devise a set of questions. These questions, designed to probe an AI’s capability to contribute to the creation and deployment of weapons of mass destruction, formed the basis of the evaluation process. Notably, the research team also unveiled a method dubbed the “mind wipe,” aimed at removing potentially harmful knowledge from AI systems while preserving their core functionalities.

Unveiling the “Mind-Wipe” Technique

Dan Hendrycks, the executive director of the Center for AI Safety, lauds the “mind-wipe” technique as a significant leap forward in ensuring AI safety. Unlike conventional safety measures, this approach offers a more robust mechanism to purge detrimental knowledge from AI models, potentially averting their exploitation for nefarious purposes. The urgency for such advancements is underscored by the AI Executive Order signed by U.S. President Joe Biden in October 2023, which mandates actions to mitigate the risks associated with AI-enabled threats.

Previous attempts to control AI outputs have proven ineffective, with existing techniques susceptible to circumvention. Alexandr Wang, the CEO of Scale AI, highlights the absence of standardized evaluations to assess the relative dangers posed by different AI models. The new study addresses this gap by introducing a comprehensive questionnaire, meticulously crafted to evaluate an AI’s propensity for contributing to various forms of harm, from bioterrorism to cyber warfare.

The Path Forward

The study’s findings underscore the need for AI developers to adopt stringent safety measures, particularly unlearning techniques. By leveraging methods like the proposed “mind wipe,” AI companies can mitigate the risks inherent in their models, thereby safeguarding against potential misuse. However, experts caution that while such techniques show promise, ensuring AI safety demands a multifaceted approach.

Miranda Bogen, director of the AI Governance Lab at the Center for Democracy and Technology, emphasizes the need for comprehensive safety protocols beyond mere evaluation benchmarks. Despite the optimism surrounding the “mind-wipe” technique, uncertainties persist regarding its ability to eradicate hazardous knowledge entirely. Nonetheless, proponents believe that initiatives like these represent crucial steps toward enhancing AI safety and curbing the proliferation of harmful applications.

In the evolving landscape of AI models, where advancements bring both promise and peril, initiatives that prioritize safety and accountability are paramount. As the global community grapples with the ethical dimensions of AI development, innovative solutions like the “mind-wipe” technique offer a glimmer of hope in navigating these complex challenges.