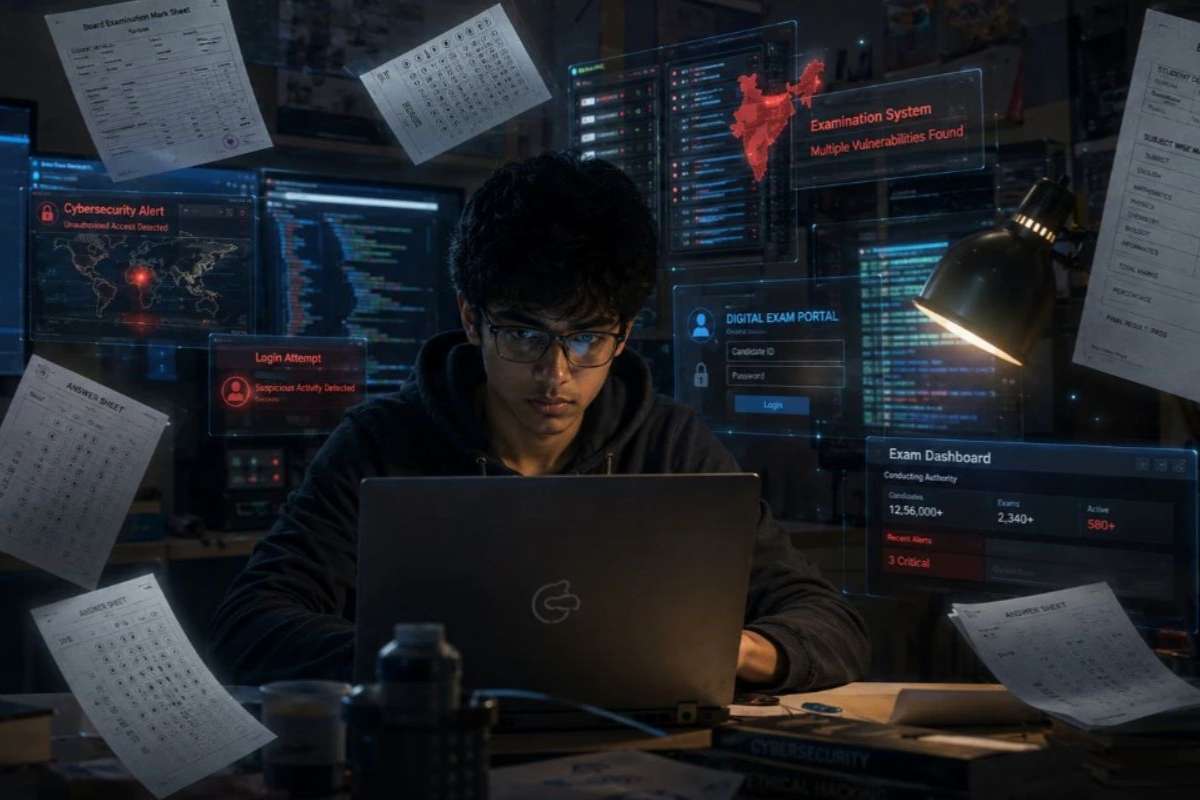

A New Era of Cyber Threats

Cyberattacks have become a common occurrence, striking roughly every 39 seconds. From phishing to ransomware, the forms of cybercrime are diverse, but their impact is uniformly destructive. Forecasts predict that cybercrime could cost the global economy $9.5 trillion in 2024. Cybercriminals' adoption of AI is exacerbating the problem, enabling more sophisticated and larger-scale attacks.

RiverSafe surveyed Chief Information Security Officers (CISOs) across the UK for a report on the current cyber threat landscape and on how businesses are countering the evolving menace of AI-powered cybercrime. Their insights reveal the pressing challenges and the strategic responses needed to combat these digital threats.

The Evolving Threat Landscape

AI is significantly altering the cyber threat environment. One in five CISOs considers AI the most significant threat, as its advancing capabilities provide cybercriminals with powerful tools. The National Cyber Security Centre (NCSC) notes that AI is already being used extensively in malicious activities, increasing the frequency and severity of cyberattacks, including ransomware.

AI enhances traditional cyberattacks by making them harder to detect. For instance, AI can modify malware so that it evades antivirus software. Once one variant is detected, AI can quickly generate new ones, allowing the malware to keep operating unnoticed, stealing sensitive data and spreading across networks.

Beyond malware, AI aids in bypassing firewalls, generating convincing phishing emails, and creating deepfakes to deceive victims into divulging sensitive information. The use of AI in these areas underscores the need for advanced cybersecurity measures to keep pace with evolving threats.

Mitigating Internal and External AI Threats

The misuse of AI is not limited to external cybercriminals. Employees, often inadvertently, can also compromise security by using AI tools that may expose company data. According to the RiverSafe report, one in five security leaders experienced a data breach due to employees sharing sensitive information with AI tools like ChatGPT.

Generative AI tools, while useful for streamlining tasks, pose a significant risk when employees input proprietary data without understanding how it might be misused. A major data breach in 2023 involving ChatGPT highlighted these risks, exposing the payment details and personal information of subscribers.

In response, some companies have banned the use of generative AI tools. However, these measures are seen as temporary. A more sustainable approach involves educating employees and implementing robust policies to safely integrate AI tools into the workplace. This approach allows businesses to benefit from AI while minimizing security risks.
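
One way such a policy might be enforced in practice is to scrub obvious identifiers from text before it is sent to an external AI tool. The Python sketch below is illustrative only and is not drawn from the RiverSafe report: the `redact` function and the two patterns in `PATTERNS` are hypothetical, and a real deployment would need far broader coverage than email addresses and card-like numbers.

```python
import re

# Hypothetical patterns for illustration; a real policy would define its own
# and would likely cover many more kinds of sensitive data.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(text: str) -> str:
    """Replace each match of a known pattern with a labeled placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label} REDACTED]", text)
    return text


if __name__ == "__main__":
    prompt = "Contact jane@example.com, card 4111 1111 1111 1111."
    print(redact(prompt))
```

Pattern matching of this kind catches only well-formatted identifiers. It would not recognize proprietary source code or confidential business plans, which is why employee education remains part of the approach described above.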

The Rising Threat of Insider Incidents

Insider threats are a major concern for cybersecurity, with 75% of CISOs viewing them as a greater risk than external attacks. Human error is a leading cause of data breaches, often due to ignorance or unintentional mistakes. Insider threats are challenging to mitigate because they can originate from employees, contractors, third parties, and anyone else with legitimate access to systems.

Despite the recognized risks, 64% of CISOs reported insufficient technology to protect against insider threats. Over the past five years, insider threat incidents have surged by 47%, highlighting a significant gap in cybersecurity defenses.

Several factors contribute to the increase in insider threats. Digital transformation has led to more complex IT environments and interconnected systems, complicating security measures. Additionally, expanding digital supply chains introduce new vulnerabilities, with trusted partners responsible for a substantial portion of insider incidents.

The growing threat of AI in cybersecurity necessitates an overhaul of current security strategies. Businesses must update their policies, practices, and employee training to effectively address the risks posed by both internal and external AI-powered cybercrime. The landscape of cyber threats is rapidly changing, and organizations must stay ahead to protect their valuable digital assets.